In 2017, I visited SHA 2017, a "hacker camp"

in the Netherlands, and afterwards I wrote

a lengthy blog post about what I did not like about it.

Now in 2022, the successor event took place at the end of July:

The MCH 2022 ("May Contain

Hackers"). And so I think

it's time for an update - did things change compared to last

time? They did, and in fact they changed so much for the better

that it makes me wonder whether Orga read my SHA2017 blog entry.

The first part of this post will be a direct comparison with 2017.

The second part is about new things that I found interesting.

This blog post is "a little" late (10 months after the event),

but hey, better late than never?

The coin system

At SHA2017, the bar and the food court insisted on using

a system of plastic coins. You could only pay with these

plastic coins there. You could only get multiples of

10 coins == 10 Euro out of machines, either with cash

or a credit or debit card. You could not change the coins back,

so any leftover coins at the end of the event became

worthless plastic waste, and they would keep your money.

It felt like a ripoff that I would expect at a commercial

festival, but not at a not-for-profit hacker camp.

This was completely changed for MCH2022, and it was a

massive improvement. There were no more plastic coins.

Instead, two ways of payment were possible:

- with standard contactless debit or credit cards.

- with QR-codes

The standard contactless was extremely convenient, and

used by most people, and the QR-codes were for those

concerned about their privacy.

You could buy QR-codes for arbitrary amounts of money with

cash - and only cash. Every time you paid with the QR-code,

the amount you paid would be deducted from the balance

assigned to the QR-code, until there was none left. Now

you may say: Wait a minute, isn't this the same thing as

the plastic coins before? And in a way it is, but with one

major difference: You could get the remaining amount of

money on the QR code back at any time in cash.

The system was not perfect though. I did not pay with

QR-code, so I do not have any first-hand experience, but

others criticized that you simply could not buy them before

Day 1, and you could not recharge them - they could only be

charged once.
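
For the programmers among you, here is how I imagine the bookkeeping behind such a QR-code, as a minimal sketch. I have no idea how the real system was implemented; the class `QrVoucher` and its field names are made up by me, and the only rules it models are the ones described above: charged once with cash, every payment deducted, remainder refundable.

```python
from dataclasses import dataclass


@dataclass
class QrVoucher:
    """Hypothetical prepaid QR-code; name and fields are invented for illustration."""

    balance_cents: int  # set exactly once, when the code is bought with cash

    def pay(self, amount_cents: int) -> None:
        """Deduct a purchase from the balance; there is no way to top up again."""
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        if amount_cents > self.balance_cents:
            raise ValueError("insufficient balance")
        self.balance_cents -= amount_cents

    def refund(self) -> int:
        """Return whatever is left (to be paid out in cash) and empty the code."""
        remaining = self.balance_cents
        self.balance_cents = 0
        return remaining
```

The `refund` method is the whole difference to the plastic coins: whatever you did not spend, you get back.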

It was a bit irritating that the pop-up supermarket was

not at all connected to the payment-system. It only accepted

cash (which none of the food vendors were permitted to accept!) or

certain debit cards. No credit cards at all, and

not even most non-Dutch debit cards, only those that still

had Maestro.

(Yes, there was a pop-up supermarket right next to the food

court, and it was the greatest thing since sliced bread. It

also sold sliced bread.)

The food court

...was inadequate, just like at SHA 2017. Not because the

food was bad, but because it lacked both choice and capacity.

It also had extremely weird opening hours that were never

even published - for example, I once could not get anything

to eat at 13:00 because nothing was open yet?!

I also missed "The Holy Crepe" that had served

pancakes at SHA2017. So sadly, no improvement there.

The terrain

The terrain was exactly the same one as at SHA2017.

Orga likes it very much, because it has a lot of infrastructure

already in place, including but not limited to a few toilets and showers,

and water and drain connections for additional toilets and showers all over

the field. They only had to place the containers and connect them up.

Even some fibre cables across different parts of the site were already

in place. It is very spacious. And it has its own harbour, which is

pretty cool.

At SHA2017, the biggest problem was that the terrain was just

very bad for camping. Large parts of the ground consisted of sea clay.

Water did not drain there at all. That meant that after a rain

shower, the water would stay there for a very long time.

Even one day after a rain shower there were still puddles in many

parts of the terrain. When people said they would need rubber boots,

at first I thought they were joking. They were not.

Now while the location had not changed for MCH2022, it was said

there had been significant improvements made to the drainage of

the terrain since SHA2017. I'm willing to believe that. There

were significantly fewer and smaller rain showers at MCH2022

than there were at SHA2017, so it wasn't really stress-tested

for a fair comparison, but everything dried off very quickly

after the rain. If I had not known it, I would not have believed

this was the same terrain as at SHA2017.

I also criticized in the 2017 post that the fields on the sea-side

of the dyke were not put to good use, despite them having a nice

sandy ground with good drainage, because they were not

provided with electricity or network. They were fully utilized

for MCH2022 - at least those that weren't occupied by the scouts.

And I was whining that there were only very very few spots for

campervans in 2017. That also changed for the better, and by a

lot. They still were permitted only on three fields

to which they would be assigned, but there were many more spots,

and thus tickets weren't sold out within 3 minutes.

This was fine.

The parking

Parking was NOT in the same field that was used for SHA2017,

and was in fact a little closer to the first tents belonging

to the event, but other than that, not much changed.

There is only parking for a few cars on site, so for the event a

whole parking lot had to be built in a field nearby. At SHA, this

quickly deteriorated into an absolutely horrible mudfest, despite the

large metal plates that made temporary roads. At MCH, it did not, but

that is probably mostly because less rain fell. The only part that got

pretty muddy was the main way in, the rest was rather fine (considering

the fact that it really was just a meadow in the woods).

A parking ticket still was 42 Euros, just like at SHA2017.

The path to the actual event was lit a bit better this time and also

only half as long, but what was not ideal was that it was directly

next to the main way in for the cars for about 50 meters.

The luggage shuttles were great, and they actually had that thought

out pretty well: There were a few reserved parking spaces right next

to the shuttle stop, so you could temporarily park there to unload

all your stuff (putting it on metal plates, so not in the mud), then

drive away the car to a proper parking space, then load your stuff

onto the shuttle.

For the teardown, a few (IIRC 6) pickup points on the field were

defined, and you had to carry your stuff there then wait for the

shuttle. That obviously scaled a lot better than picking up people

individually, while still keeping the distance you had to travel

on foot reasonable.

There was some waiting time on the shuttle for both buildup and

teardown, which was to be expected, but it still worked pretty

well. There did not seem to be many people driving the shuttles, so

it might have worked even better with more volunteers - yes, I'm

well aware that I could have and should have volunteered myself

in time, so it's partly my own fault.

The restrictions

Were probably similar to the ones at SHA2017, but I did not pay

close attention this time.

However, I did not notice random heaps of fire-extinguishers all

over the place this time, so it seems they at least dropped that

nonsense.

The teardown

I did not have to do much teardown myself this time because we

had no village, but at least one thing I criticized in 2017 was

handled a lot better: Like at SHA2017, they requested that

the site be vacated by 12:00 the day after the official end

of the event, which is fair, because if you have a very long

drive home, you simply cannot do that on the evening

the event officially ends. However, at SHA2017, shortly

after the official end, the power was turned off and the

toilets locked, which I criticized as a massively bad idea,

because it forces people who simply cannot go home that

night to walk to the single open toilet that is 2 km away

in darkness.

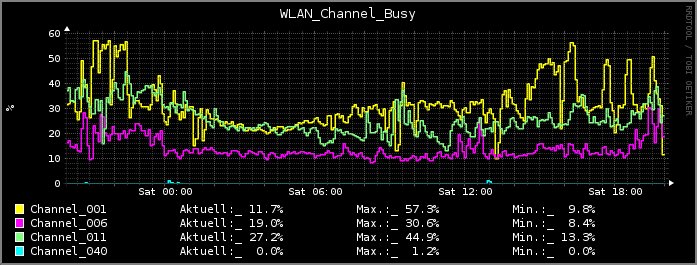

This did not happen at MCH2022. Power and even the WiFi

network stayed on until around 9 or 10 the next morning.

That IMHO is pretty much the perfect way to handle this.

You're not inviting people to stay longer than needed, but

you give them the opportunity to stay as long as they need

in order to get home safely.

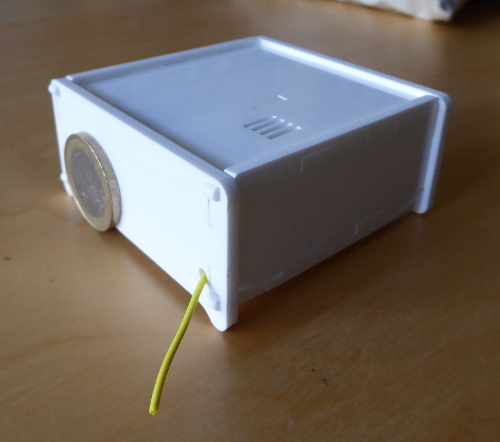

The factory firmware for the badge

There was again a brand new badge, like at SHA2017,

but unlike at SHA, this time the factory firmware

worked properly.

The weather

Like most of Europe, large parts of the Netherlands were under

a massive dry spell. While driving there, I could hardly believe

my eyes - large areas next to the highway were completely

sunburnt and brown. That is something I have seen in countries

much further south, but which I would never have expected

in the Netherlands.

The area where MCH took place was not that dried out, probably because

it was close enough to the sea. And there were a few small showers

during the event. One day it was clearly too hot, so I spent most

of the day in the shady village tent. But overall, the weather

really was nice, almost perfect.

What else - COVID

Naturally, COVID19 massively influenced the event.

Firstly, this was supposed to have been MCH2021 - but it simply

wasn't feasible in 2021, and it was cancelled in February 2021 due to

the massive uncertainty. All tickets were refunded. In hindsight,

that was a very wise decision, because that summer the Netherlands

experienced quite a surge in infections and imposed a few

restrictions. It would also have meant problems for visitors

from e.g. Germany.

The situation was entirely different in 2022, and so the

event did take place after all, one year later than planned.

Planning resumed, and new tickets were sold.

In fact, by the time of the event, the Netherlands

had adopted a very strong "we don't give a FSCK anymore" stance,

so there were no restrictions in place anymore. Coming from

Germany, which sadly had not reached that state at the time,

this was very enjoyable for me.

As a result of that, the direct effects on the event itself were

minimal. Of course, Orga tried to keep the big tents well

ventilated. And surely some people did get infected, although in

most cases they probably did not catch the infection on site.

An area behind the Orga tent was provided for those among

them who could not get home immediately,

so they could stay there relatively isolated until they could

get home.

But naturally there were indirect effects, and quite a lot of

them.

Many people I know refused to get tickets, because "it's going

to have to be cancelled again anyways". Others did not want to

go there because "OMG COVID". As a result, only very few people

from my circle of friends and acquaintances visited the event,

maybe 20% of those that visited SHA2017. We were not enough

people to form a proper village.

Another indirect effect was with the terrain: Because it wasn't

booked a very long time in advance as usual, there were already

other events booked on it at the same time. Now that isn't

much of a problem with regards to space, the "Scoutinglandgoed"

is really big, and it could accommodate all events in

parallel without feeling crowded.

But it meant there were a lot of scouts from one or two scouting events

around. And they also occupied parts of the fixed on-site

infrastructure, like the new adventure-house (that was not

built yet at SHA2017), and the meadow behind it, which was

in the middle of and thus completely surrounded by MCH2022.

As a result, scouts were expected to go in and out all the time.

And because the public cycle- and foot-path that goes through

the middle of the terrain wasn't closed off either (like it was

at SHA2017), that basically meant you could have visited the

event without paying, because nobody ever cared to check your

wristband. It simply wouldn't have been practical - they

would have needed way too many checkpoints. The only thing

that was checked was arriving cars - but with a bicycle or

on foot you could have just gone in.

What else - Misc

- There was a good number of fire shows. Among them, a

fire dragon built for one night only on-site by an artist.

There also was the phone: A phone with a published

number attached to the local phone network for the event.

The whole point of that phone was that people could

call the number, and it would "ring" - just that it

wasn't a normal ring, instead a gas-powered ring of

fire above it would send out big bursts to "ring" -

in the rhythm of the "toot - toot" that you would

get while calling.

Completely useless, of course - but nonetheless a

project that I found incredibly cool.

- There was a pass over the dyke, so you could cross

the dyke in two places now, not just one like at SHA2017.

Very handy for getting to the other side a lot faster.

- Geraffel village tried to reach new heights of annoyingness,

and were very successful in that. They successfully

forced the 80s village to move, leaving their rented

tent (for which they paid $$$) behind, which was

celebrated by Geraffel as a great success. There also

was visibly a lot of empty space around Geraffel, as

people kept their distance. And despite being located

quite far away, even we could still hear the noise

these morons were making all day. While most people

were being excellent to each other, Geraffel instead

seemed to try to win a prize for "biggest assholes

on site".

- The pop-up supermarket. Of course there normally

is no supermarket on that site, it was built up just for

the event, in a big tent. Having a small supermarket

on-site was definitely very cool, it saved a lot of

people trips to the not-so-near town.

EOF

So how was MCH2022?

Overall, I'd say it was a pretty good event. Sure there is room

for improvement in some areas, but it really was better than

SHA2017. I will gladly visit WhatEverItIsCalled2025.
